Latency - when it is and isn't important, and how to deal with it when it is

What is Latency?

Because latency is one of the first topics people ask about our interfaces, here are a few general points about it - where it comes from, when it is and isn't important, and how to work around it.

Latency is just the time it takes something going into your audio system to come out your speakers. It always takes *some* processing time, whether you're playing a MIDI instrument, singing into a mic, playing a guitar or bass, DJ-ing... anything.

People usually refer to the speed of sound for perspective when explaining latency. It takes roughly 5 milliseconds (ms) - that's 5/1000ths of a second - for sound to reach your ears from a guitar amp about 5 feet away from you. Or 3ms for the sound from nearfield monitors if they're a yard away (a typical placement).

That's based on the speed of sound, which for working purposes is close enough to 1 foot per ms. It's actually about 1.1 feet per ms (1100 feet per second), depending on atmospheric conditions, elevation, etc.
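If you'd like to check the arithmetic, here is a small Python sketch. It assumes the 1100 feet per second figure quoted above; the function name is just for illustration.

```python
# Speed of sound used in the text: roughly 1100 feet per second.
# The real value shifts a little with temperature, elevation, etc.
SPEED_OF_SOUND_FT_PER_S = 1100.0


def acoustic_delay_ms(distance_ft, speed_ft_per_s=SPEED_OF_SOUND_FT_PER_S):
    """Time in milliseconds for sound to travel distance_ft through air."""
    return distance_ft / speed_ft_per_s * 1000.0


# The two distances mentioned in the text.
for label, feet in [("guitar amp", 5.0), ("nearfield monitors", 3.0)]:
    print(f"{label}: {feet:g} ft -> {acoustic_delay_ms(feet):.1f} ms")
```

That comes out to about 4.5ms and 2.7ms, which is why "1 foot per ms" (5ms and 3ms) is close enough for working purposes.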

Here's a more real-world way to understand how short the times we're talking about are. Look at a clock's second hand and count 1-2-3-4-5 each second (you may also be able to hear a watch ticking at that speed). Each number is 200ms, 1/5 of a second. Now go "ticka-ticka ticka-ticka ticka-ticka ticka" at the same speed. Each tick and each -a is 100ms, 1/10 of a second.

It's not easy to divide that in half in your head to get to 50ms. Depending on their complexity, two sounds closer than about 50ms apart are perceived as happening at the same time. This is called the Haas Integration Zone, named after acoustician Helmut Haas.

Remember: the whole system can't have lower latency than its slowest point!

So why does my System have Latency?

Latency comes from several places:

Digital Converter Latency

It takes a couple of milliseconds for analog sounds coming in to be digitized, and on the way out for digital code to be converted back to sound (or more accurately, to an electric waveform to drive your speakers).

Even our interfaces that support multiple computers have only a fixed 3ms of latency from analog in to analog out. Considering the buffering it takes to keep those machines in sync, our engineers are justifiably proud of that spec.

Does converter latency matter? Not really. This is the spec people ask about, but in my opinion it's not the relevant one. Very, very few people are sensitive to a few ms of latency, never mind just 3, even when playing percussive sounds.

Now, a singer or instrumentalist may hear the direct sound "phasing" against the sound coming out of their headphones a few ms later. But lower latency doesn't really make much of a difference to that; it's just life in the digital world... yet lots of great music is created in digital systems every day.

Computer Latency

You will notice the latency when routing sound in and out of a computer, because it's a few times larger than converter latency. But there are workarounds that eliminate the issue.

Does computer latency matter? Not when you're mixing, but it does when you're recording (although you can bypass the computer when recording - please see "direct monitoring" below). And when you're playing virtual instruments running on the computer, you want them to be as responsive to your playing as possible.

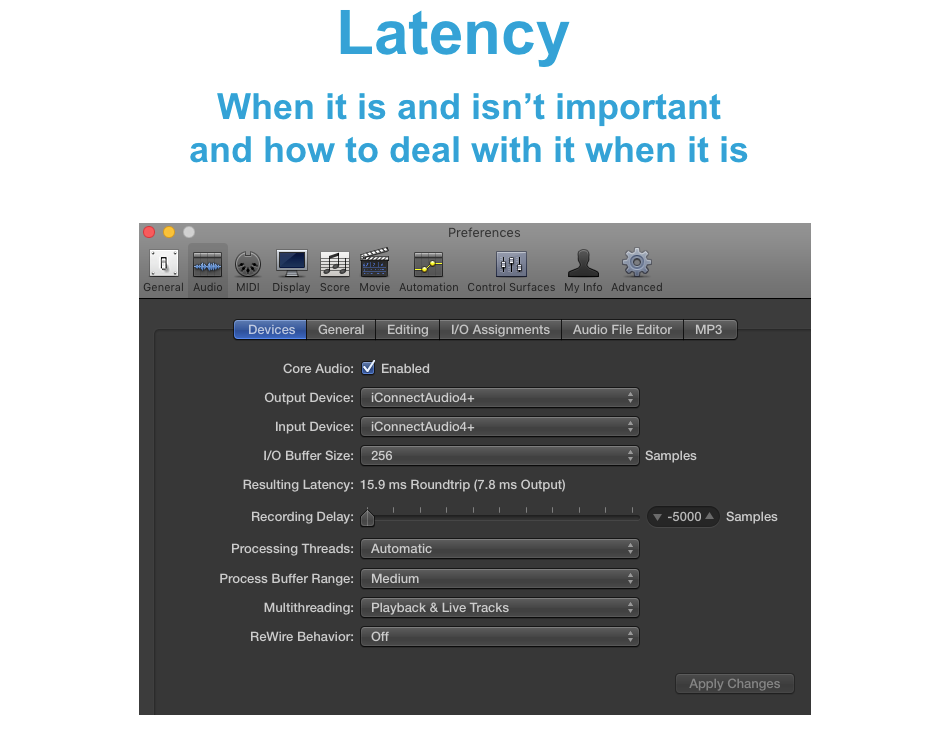

Dealing with computer latency. DAWs always have an I/O (in/out) buffer setting to give them time for processing, mixing, and so on. The larger the buffer, the higher the latency; and up to a point, the lower the amount of computer horsepower it takes to run a session.

Obviously you want to set as low a buffer as possible, and the white-coat scientific method of finding that is simply to keep lowering your setting until the sound starts messing up. The more processing you have going on, the higher a setting you'll need.

Computers and sessions are all different, and so are DAWs... and for that matter so are individual musicians' sensitivities. But a DAW buffer setting of 256 samples is completely playable for most musicians. You can feel the difference between 128 and 256 samples, but it's not major, and 512 samples might be in the annoying range.

A 256-sample audio interface buffer in Logic Pro is a 14.8ms round trip (7.6ms output if you're playing virtual instruments); expect similar performance in other DAWs.
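To put buffer sizes in time terms: a buffer of N samples holds N divided by the sample rate seconds of audio. The sketch below assumes a 44.1 kHz sample rate (the figures above don't say which rate was used), and the function name is only illustrative. It counts just the buffers themselves, so expect the round trip your DAW reports to be somewhat higher once converter latency and any driver overhead are included.

```python
def buffer_latency_ms(buffer_samples, sample_rate_hz=44100):
    """Time in milliseconds represented by one buffer of audio.

    sample_rate_hz defaults to 44.1 kHz, which is an assumption here.
    """
    return buffer_samples / sample_rate_hz * 1000.0


# Common DAW I/O buffer settings.
for size in (128, 256, 512, 1024):
    one_way = buffer_latency_ms(size)
    # "in + out" is simply one input buffer plus one output buffer.
    print(f"{size:>4} samples: {one_way:5.1f} ms per buffer, "
          f"{2 * one_way:5.1f} ms in + out")
```

At 44.1 kHz a 256-sample buffer is about 5.8ms each way, and every doubling of the buffer doubles that time.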

Some other hints: you can bypass computer-intensive plug-ins while recording to allow a lower buffer setting, then raise it when you turn them back on for mixing (when you don't care about latency, since you're not playing). And most DAWs now have a plug-in delay compensation feature to align live sound with recorded sound going through plug-ins.

Also, some virtual instruments, such as Native Instruments' Kontakt sampler, have buffer settings that you want to minimize. A huge sampled orchestra running off standard hard drives is going to require a larger buffer than a couple of instruments streaming off solid-state drives.

MIDI Latency

Every device you send MIDI to adds a little latency. While it's better to put every MIDI device on its own interface port than to daisychain, MIDI latency is one of those things you just don't worry about (it is what it is, and has been since MIDI started in 1983, and it shouldn't be a problem). The important consideration with MIDI is solid timing rather than latency, and of course iConnectivity is known for that.
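For a sense of scale, here is a rough sketch of how long a message takes to travel down a classic 5-pin DIN MIDI cable. It assumes the MIDI 1.0 serial rate of 31,250 bits per second with 10 bits on the wire per byte (start bit, 8 data bits, stop bit); USB and network MIDI behave differently, and the function name is just for illustration.

```python
MIDI_BAUD = 31250            # bits per second on a 5-pin DIN MIDI 1.0 link
BITS_PER_BYTE_ON_WIRE = 10   # 1 start bit + 8 data bits + 1 stop bit


def midi_transmit_ms(num_bytes):
    """Milliseconds needed to send num_bytes over a DIN MIDI cable."""
    return num_bytes * BITS_PER_BYTE_ON_WIRE / MIDI_BAUD * 1000.0


# A Note On message is 3 bytes: status, note number, velocity.
print(f"Note On (3 bytes): {midi_transmit_ms(3):.2f} ms")
```

A single Note On takes just under 1ms on the wire, which is small next to the buffer times discussed earlier.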

MIDI Program/Sound Latency

Samples and synth programs take a little while to "speak." If you're playing a smooth pad or string program, some latency feels normal. But try to play a sampled drumset with your DAW buffer set to 1024 samples, and you'll get really frustrated at the horrible timing. There's not much to say about this!

How can I Avoid Latency when Recording?

Direct Monitoring

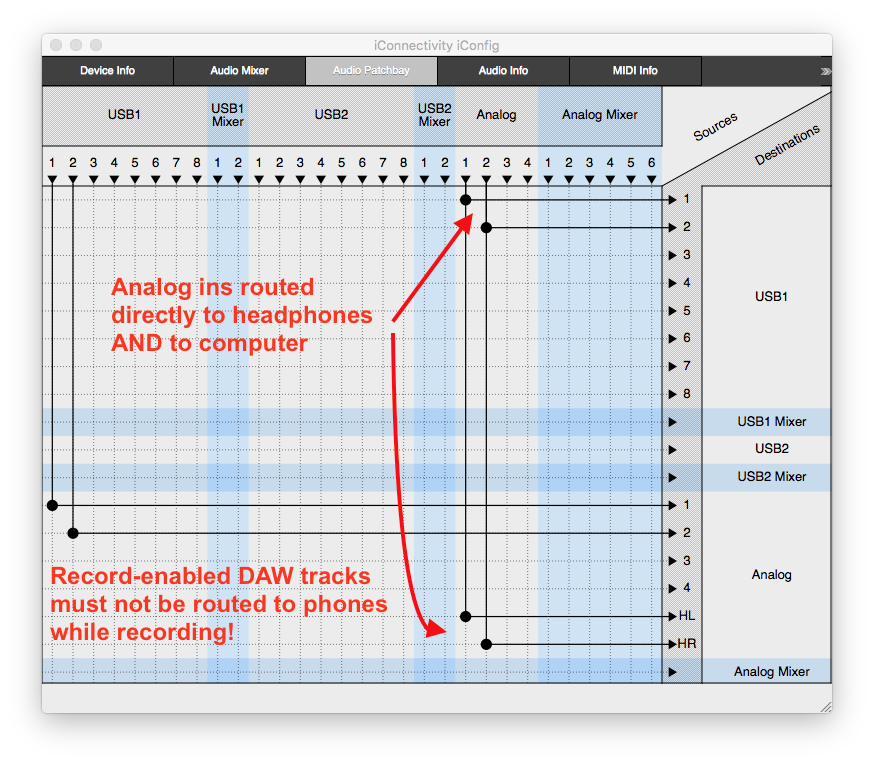

The way to deal with computer latency when you're recording is just to bypass it. This is really easy to set up in iConfig. Everything other than the routings we're discussing has been cleared from the audio patchbay to make it easy to understand.

Final Advice

Don't worry about interface and MIDI latency! Set as low a buffer as you can get away with in your DAW, and use direct monitoring when you're recording.